Beyond Benchmarks: Why Every Field Needs Reproducibility Infrastructure

- Incepta Labs Team

- Mar 20

The Real Bottleneck Isn’t Generation; It’s Verification

Across science, AI, and knowledge systems, we are entering a new phase:

We can generate ideas faster than we can determine whether they are true.

AI accelerates:

hypothesis generation, analysis, content creation, even proofs and models

But it does not solve verification.

This creates a growing gap between:

what appears correct

and what is independently reproducible

The Compression of Signal

This problem existed before AI.

Most systems reward: output, novelty, publication, engagement

Not:

validation

consistency

reproducibility

AI amplifies this dynamic.

When content becomes cheap, signal becomes harder to identify.

Benchmarks Are Not Reality

Modern AI systems are primarily evaluated using benchmarks.

Benchmarks measure:

performance on curated datasets

accuracy under fixed conditions

single-run outputs

They answer:

Can this system perform well once?

They do not answer:

Will this result hold across independent executions, environments, and conditions?

In practice:

models drift

environments vary

pipelines are non-deterministic

A system can:

score highly on a benchmark

and still fail to reproduce its own outputs
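One way to make this gap concrete is to run the same input several times and measure how often the outputs agree. The sketch below is illustrative only: `make_flaky_pipeline` is a hypothetical stand-in for any non-deterministic system, and the metric (the share of runs that match the most common output) is an assumption rather than an established standard.

```python
import random
from collections import Counter
from typing import Callable, Hashable


def agreement_rate(run: Callable[[], Hashable], n_runs: int = 10) -> float:
    """Fraction of runs that produced the most common output."""
    if n_runs < 1:
        raise ValueError("n_runs must be at least 1")
    outputs = [run() for _ in range(n_runs)]
    _, top_count = Counter(outputs).most_common(1)[0]
    return top_count / n_runs


def make_flaky_pipeline(seed: int, flip_probability: float) -> Callable[[], str]:
    """Hypothetical pipeline: usually returns "A", sometimes "B"."""
    rng = random.Random(seed)
    return lambda: "B" if rng.random() < flip_probability else "A"


# A deterministic pipeline always agrees with itself.
stable = agreement_rate(lambda: "A", n_runs=20)
# A non-deterministic one may not, even if a single run looks correct.
flaky = agreement_rate(make_flaky_pipeline(seed=0, flip_probability=0.3), n_runs=20)

print(f"stable pipeline agreement: {stable:.2f}")
print(f"flaky pipeline agreement:  {flaky:.2f}")
```

A single benchmark run corresponds to `n_runs=1`, where the agreement rate is 1.0 by construction and says nothing about stability.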

The Missing Layer: Reproducibility as the Unit of Value

Across domains, one principle holds:

Truth is not what is produced once; it is what remains true under independent verification.

Yet most systems do not assign value based on that.

A more robust framework would:

require independent replication or verification

record outcomes across contexts

condition rewards on reproducibility

scale value based on consistency

This shifts the unit of value from:

outputs → verified, reproducible results
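As one possible way to "scale value based on consistency", a score can account for both the observed success rate and the number of independent attempts behind it. The sketch below uses the Wilson score lower bound, a standard statistical interval that is swapped in here as an assumption; the article does not prescribe a scoring rule, and the function name and the default `z = 1.96` (roughly 95% confidence) are illustrative.

```python
import math


def wilson_lower_bound(successes: int, attempts: int, z: float = 1.96) -> float:
    """Conservative estimate of the true success rate from replication counts."""
    if attempts < 0 or not 0 <= successes <= attempts:
        raise ValueError("need 0 <= successes <= attempts")
    if attempts == 0:
        return 0.0  # no independent evidence yet, so no value is assigned
    p = successes / attempts
    z2 = z * z
    centre = p + z2 / (2 * attempts)
    margin = z * math.sqrt(p * (1 - p) / attempts + z2 / (4 * attempts ** 2))
    # Clamp to guard against tiny negative values from floating-point rounding.
    return max(0.0, (centre - margin) / (1 + z2 / attempts))


# Same 75% observed success rate, but different amounts of evidence.
few = wilson_lower_bound(successes=9, attempts=12)
many = wilson_lower_bound(successes=90, attempts=120)
print(f"9/12 replications:   score {few:.2f}")
print(f"90/120 replications: score {many:.2f}")
```

Under this rule a result replicated many times scores higher than one with the same success rate but fewer attempts, and a result with no replications scores zero.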

A Cross-Domain Problem (Non-Limiting Examples)

This is not specific to one field.

It appears across at least five domains:

1. Science / Bench Research

experimental results fail to replicate

methods omit execution detail

tacit knowledge is not captured

2. Artificial Intelligence / Machine Learning

outputs vary across environments

pipelines are difficult to reproduce

benchmarks fail to capture real-world behavior

3. Mathematics

proofs are increasingly complex

AI systems generate conjectures and proofs

independent verification becomes the bottleneck

4. Physics (Theoretical and Computational)

models may be internally consistent but untested

simulations depend on assumptions

validation is limited

5. Social Sciences

low replication rates

publication bias

statistical fragility

In some cases, the reproducibility problem is not an exception — it is systemic.

Why Reproducibility Fails

Across these domains, a consistent issue emerges:

Systems reward outputs, not validation.

And critically:

The full method is rarely captured.

In many cases:

execution details are missing

environmental conditions are not recorded

tacit knowledge remains implicit

A New Framework: Structured Reproducibility

A reproducibility-based system would:

Allow results to be submitted

Enable independent verification or replication

Record execution conditions and outcomes

Aggregate results across attempts

Assign value based on reproducibility

This creates a new structure:

Instead of:

isolated papers

disconnected replication attempts

You get:

structured reproducibility records

Example:

Result X:
- Replications: 12
- Success rate: 75%
- Failure conditions: Y, Z
- Method versions: v1.0 → v1.2
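A record like this could be derived from individual replication attempts rather than written by hand. The following is a minimal sketch under stated assumptions: the class and field names (`ReplicationAttempt`, `ReproducibilityRecord`, `failure_condition`) are hypothetical, and the attempts are made-up data chosen only to mirror the example record.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ReplicationAttempt:
    method_version: str
    succeeded: bool
    failure_condition: Optional[str] = None  # label for why it failed, if known


@dataclass
class ReproducibilityRecord:
    result_id: str
    attempts: List[ReplicationAttempt] = field(default_factory=list)

    def success_rate(self) -> float:
        """Share of recorded attempts that succeeded (0.0 when there are none)."""
        if not self.attempts:
            return 0.0
        return sum(a.succeeded for a in self.attempts) / len(self.attempts)

    def failure_conditions(self) -> List[str]:
        """Distinct failure labels, sorted for stable output."""
        return sorted({a.failure_condition for a in self.attempts
                       if not a.succeeded and a.failure_condition})

    def method_versions(self) -> List[str]:
        """Method versions in order of first appearance."""
        return list(dict.fromkeys(a.method_version for a in self.attempts))

    def summary(self) -> str:
        return "\n".join([
            f"Result {self.result_id}:",
            f"- Replications: {len(self.attempts)}",
            f"- Success rate: {self.success_rate():.0%}",
            f"- Failure conditions: {', '.join(self.failure_conditions())}",
            f"- Method versions: {' → '.join(self.method_versions())}",
        ])


# Made-up attempts mirroring the example record: 12 attempts, 9 successes.
record = ReproducibilityRecord("X")
for version, ok, why in (
    [("v1.0", True, None)] * 2 + [("v1.0", False, "Y"), ("v1.0", False, "Z")]
    + [("v1.1", True, None)] * 3 + [("v1.1", False, "Y")]
    + [("v1.2", True, None)] * 4
):
    record.attempts.append(ReplicationAttempt(version, ok, why))

print(record.summary())
```

Keeping the raw attempts and computing the summary on demand means new replications update the record without anyone editing the totals.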

Reproducibility Is Not Binary

One of the most important insights:

Reproducibility is not pass/fail — it is a process.

Today:

replication fails

authors respond

disagreements remain unresolved

In a structured system:

replication attempts are recorded

authors provide feedback

methods are updated

outcomes improve over time

This becomes:

method versioning + validation

Method v1.0 → 40% success

Method v1.1 → 85% success

Method v1.2 → 95% success
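Per-version success rates of this kind can be computed directly from a log of replication attempts. The sketch below is an illustration, not a prescribed implementation: the function name is hypothetical, and the attempt counts (20 per version) are invented solely to reproduce the percentages in the example.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def success_rate_by_version(attempts: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Group (method_version, succeeded) pairs and return the success rate per version."""
    totals: Dict[str, int] = defaultdict(int)
    successes: Dict[str, int] = defaultdict(int)
    for version, succeeded in attempts:
        totals[version] += 1
        successes[version] += int(succeeded)
    # Dict order follows the first appearance of each version in the log.
    return {version: successes[version] / totals[version] for version in totals}


# Invented log: 20 attempts per version, chosen to match the example percentages.
log = (
    [("v1.0", True)] * 8 + [("v1.0", False)] * 12
    + [("v1.1", True)] * 17 + [("v1.1", False)] * 3
    + [("v1.2", True)] * 19 + [("v1.2", False)] * 1
)

rates = success_rate_by_version(log)
for version, rate in rates.items():
    print(f"Method {version} → {rate:.0%} success")
```

Because each failed attempt stays in the log, the improvement from one method version to the next is measured rather than asserted.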

Disagreement becomes data. Iteration becomes measurable.

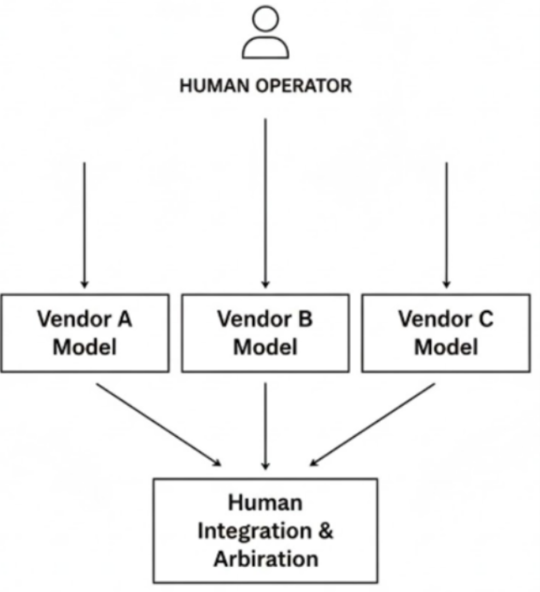

AI as a Special Case

AI systems make this problem more urgent.

Today’s AI evaluation focuses on:

benchmarks

preference ranking

single-run outputs

But real-world systems require:

consistency across runs

stability across environments

reproducibility of outputs

This introduces a new standard:

AI systems should not only perform well — they should perform reliably under independent execution.

A reproducibility-based framework enables:

re-execution across environments

tracking of model and prompt versions

consistency validation

identification of drift and instability
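A minimal sketch of what such tracking might look like follows. Everything in it is an assumption made for illustration: the `RunRecord` fields, the version and environment labels, and the choice to compare outputs by exact SHA-256 digest. Systems whose outputs vary harmlessly in wording would need a looser comparison than exact matching.

```python
import hashlib
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class RunRecord:
    model_version: str
    prompt_version: str
    environment: str
    output: str

    @property
    def output_digest(self) -> str:
        """Fingerprint of the output, so large outputs can be compared cheaply."""
        return hashlib.sha256(self.output.encode("utf-8")).hexdigest()


def find_unstable_configs(runs: List[RunRecord]) -> Dict[Tuple[str, str], Set[str]]:
    """Return (model, prompt) pairs whose outputs differ, with the environments involved."""
    digests: Dict[Tuple[str, str], Set[str]] = defaultdict(set)
    environments: Dict[Tuple[str, str], Set[str]] = defaultdict(set)
    for run in runs:
        key = (run.model_version, run.prompt_version)
        digests[key].add(run.output_digest)
        environments[key].add(run.environment)
    return {key: environments[key] for key in digests if len(digests[key]) > 1}


# Hypothetical re-executions of the same model and prompts in two environments.
runs = [
    RunRecord("model-a", "prompt-v1", "linux-gpu", "42"),
    RunRecord("model-a", "prompt-v1", "macos-cpu", "42"),
    RunRecord("model-a", "prompt-v2", "linux-gpu", "42"),
    RunRecord("model-a", "prompt-v2", "macos-cpu", "41.999"),
]

unstable = find_unstable_configs(runs)
for (model, prompt), envs in unstable.items():
    print(f"{model} / {prompt}: outputs differ across {sorted(envs)}")
```

Recording the model version, prompt version, and environment with every run is what makes an instability traceable to a specific change instead of showing up as an unexplained difference.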

Why this matters

In high-stakes domains:

healthcare

pharmaceuticals

regulatory AI

Benchmarks are insufficient.

Reproducibility becomes the foundation of trust.

A System That Improves When Used

Unlike many systems, this framework improves when participants try to optimize within it.

To succeed, participants must:

create methods that others can reproduce

clarify execution

reduce ambiguity

This leads to:

better methods

more reliable results

higher signal

The Bigger Shift

Across all domains:

generation is scaling

verification is not

This creates a new bottleneck:

determining what is actually true

Different fields define truth differently:

experiments

computations

proofs

models

But they all share the same gap:

There is no system that consistently rewards verified truth.

⚡ Final Line

The next generation of AI and scientific infrastructure will not be defined by what systems can generate — but by what they can independently verify and reproduce.

Provisional patents filed. Authorized disclosure under Averitas Holdings LLC. Pending sublicense to Incepta Labs. Original inventor and owner: Dr. Melinda B. Chu.

Priority claims trace to Nov 2023 → June 2024 → Aug 2024 → May 2025 patent families and past and active records.

Documented in HedyNova and in the Human Conception Ledger™
