AI Hallucinations and the Role of Human Verification and Mitigation

- Incepta Labs Team

- Mar 9


Large language models have introduced powerful new tools for analyzing information, generating text, and assisting with complex workflows.

Alongside these capabilities, discussions about “AI hallucinations” have become common. Hallucinations occur when a model produces outputs that appear plausible but contain incorrect or fabricated details.

The cause of this behavior is well understood within the research community: language models generate responses from statistical patterns in their training data rather than by consulting a verified knowledge base.

As a result, occasional errors are not surprising.
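A toy illustration may help make this concrete. The sketch below is not how production language models work; it is a deliberately tiny bigram counter, with a made-up corpus and hypothetical function names, that shows how a purely pattern-based generator can produce a fluent but wrong answer when it has no facts to consult.

```python
from collections import Counter, defaultdict

# Hypothetical miniature "training data"; note that it never mentions Peru.
CORPUS = [
    "the capital of france is paris",
    "the largest city of france is paris",
    "the capital of italy is rome",
    "the capital of spain is madrid",
]


def train_bigrams(sentences):
    """Count which word follows which across the corpus."""
    following = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following


def complete(prompt, following):
    """Append the single most frequent follower of the prompt's last word."""
    last_word = prompt.split()[-1]
    candidates = following.get(last_word)
    if not candidates:
        return prompt  # no pattern was seen for this word
    next_word, _ = candidates.most_common(1)[0]
    return f"{prompt} {next_word}"


model = train_bigrams(CORPUS)
print(complete("the capital of peru is", model))  # the capital of peru is paris
```

The completion is grammatical and plausible, yet false: the counter only knows that "paris" most often follows "is" in its corpus. Nothing in the procedure checks the claim against a source of truth.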

Importantly, hallucinations are not fundamentally different from errors that occur in many other forms of information processing.

Humans also make mistakes when recalling facts, summarizing information, or interpreting complex material. In both cases, verification and review remain important parts of the workflow.

For this reason, many practical uses of AI treat language models as assistive tools rather than autonomous authorities.

In research, legal analysis, software development, and other technical domains, AI systems can help summarize information, propose ideas, or draft documents. However, final verification of citations, references, and conclusions remains the responsibility of human users.

This relationship is similar to many other professional tools. Calculators assist with arithmetic, but humans still review financial results. Statistical software assists with analysis, but researchers verify their interpretations.

Artificial intelligence can dramatically accelerate many tasks, but it does not eliminate the need for judgment and validation.

An important area of ongoing development involves building systems that make verification easier and more reliable.

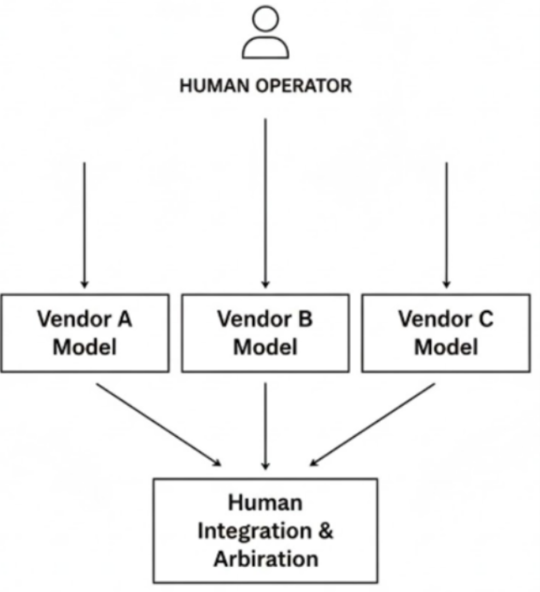

These systems may include structured workflows, multi-model validation, and software tools designed to assist with citation checking and information provenance.
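As a rough sketch of what such tooling could look like, the example below combines a simple multi-model agreement check with a citation lookup against a trusted index. Everything in it is an assumption made for illustration: the `Review` type, the `review_outputs` function, the `min_agreement` threshold, and the sample data are hypothetical and do not describe any particular product. Agreement between models does not prove an answer is correct; the point is only to decide when to route an output to a human reviewer.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class Review:
    answer: Optional[str]   # majority answer, or None if agreement is too low
    agreement: float        # share of models that gave the majority answer
    unverified: List[str]   # citations not found in the trusted index
    needs_human: bool       # True if a person should look before this is used


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def review_outputs(
    answers: List[str],
    citations: List[str],
    trusted_index: Set[str],
    min_agreement: float = 0.6,  # assumed threshold, tune per use case
) -> Review:
    """Flag outputs where models disagree or a citation cannot be matched."""
    if not answers:
        raise ValueError("at least one model answer is required")

    # Multi-model validation: how many models converge on the same answer?
    counts = Counter(normalize(a) for a in answers)
    top_answer, top_count = counts.most_common(1)[0]
    agreement = top_count / len(answers)

    # Citation checking: anything absent from the index is left for a human.
    known = {normalize(entry) for entry in trusted_index}
    unverified = [c for c in citations if normalize(c) not in known]

    agreed = agreement >= min_agreement
    return Review(
        answer=top_answer if agreed else None,
        agreement=agreement,
        unverified=unverified,
        needs_human=(not agreed) or bool(unverified),
    )


# Hypothetical usage: three model answers and two cited sources.
result = review_outputs(
    answers=["Paris", "paris ", "Lyon"],
    citations=["Source A (2019)", "Source B (2021)"],
    trusted_index={"Source A (2019)"},
)
print(result.needs_human, result.unverified)
```

In this run two of three answers agree, which clears the assumed threshold, but "Source B (2021)" is missing from the index, so the result is still flagged for human review. The design choice is to fail toward review: the check never marks an output as verified on its own authority.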

The goal is not to eliminate human involvement, but to create workflows where AI accelerates analysis while humans maintain oversight and responsibility.

As AI systems continue to improve, hallucination rates will likely decline. However, verification will remain an essential component of reliable workflows.

Rather than treating hallucinations as a reason to abandon AI tools, it may be more productive to view them as an engineering problem that can be mitigated through better infrastructure and thoughtful system design.
